AI Readiness Is the Next CS Maturity Question, and Most SaaS Companies Will Fail It

Two years ago, the AI value creation conversation in PE-backed SaaS sat with the CTO: product velocity, engineering productivity, infrastructure cost. The mandate ran through the technology org, and the metrics were deployment frequency, code throughput, and gross margin contribution.

Operating partners, boards, and CFOs have since extended the question to every function with a P&L: sales has it, marketing has it, finance has it. Customer Success has it too, and the question is harder than it looks.

Most CS functions have deployed AI at this point. License counts, vendor evaluations, and pilot programs show up on every quarterly review. The question PE operating partners are starting to ask is sharper: Where is AI driving measurable revenue, retention, or operating leverage inside the CS motion, and what structure confirms the answer?

Most organizations don't have a clean answer yet, and the reason isn't a CS leadership failure. CS leaders have done the procurement work, but a framework for measuring AI maturity inside a CS function, and for testing whether the spend produced outcomes, hasn't existed.

What AI Readiness Measures

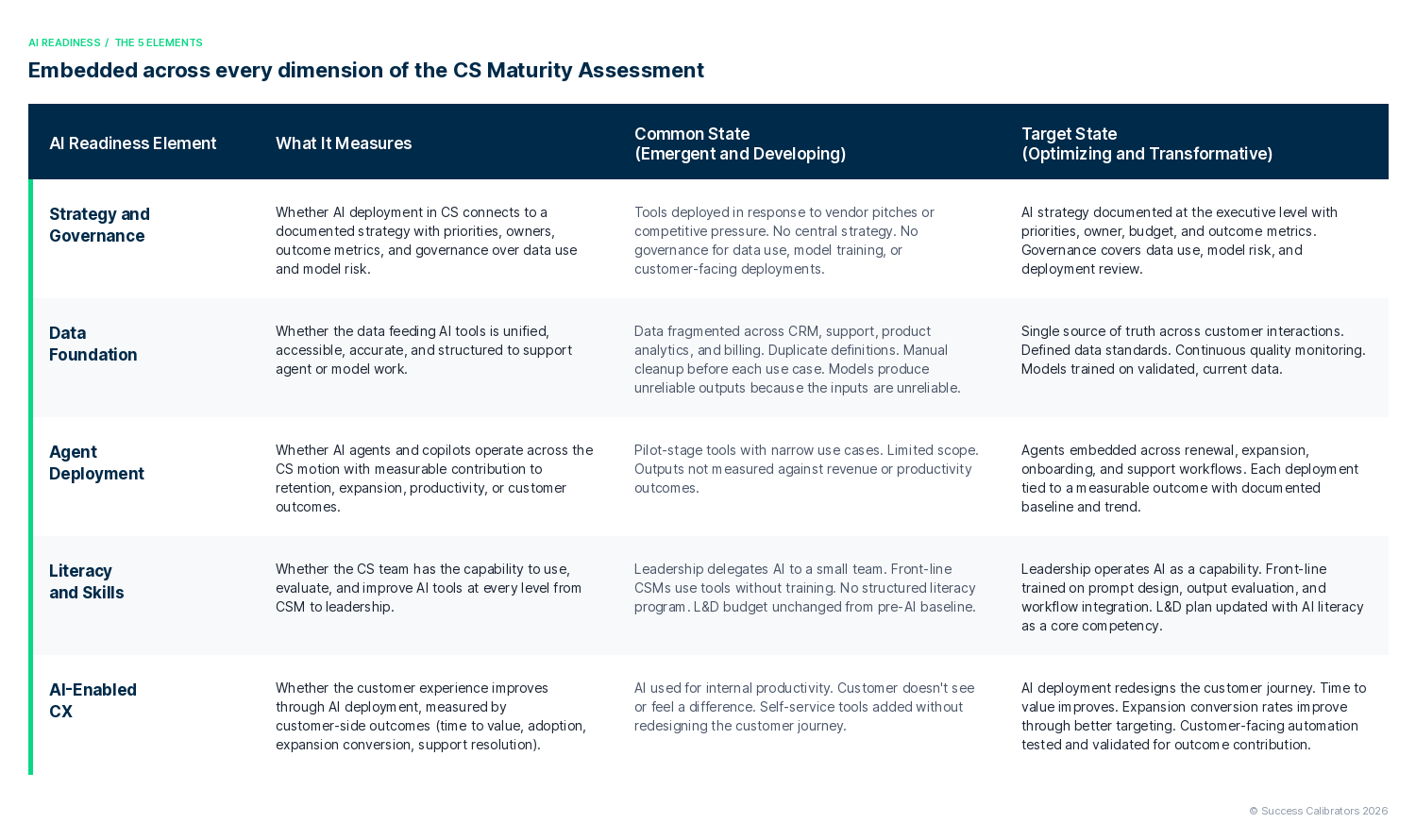

We've embedded AI Readiness as a layer across every dimension of our CS Maturity Assessment. The layer covers five elements: Strategy and Governance, Literacy and Skills, Data Foundation, Agent Deployment, and AI-Enabled CX. Each measures a different facet of whether AI deployment in CS produces real value.

The 5 elements of AI Readiness, embedded across every dimension of the Success Calibrators Customer Success Maturity Assessment.

The five elements work as a system. Without a data foundation, the team uses tools no one trusts. Without literacy, even a strong data foundation leaves CS unable to extract value from the work, and adoption and utilization stay inconsistent. Agents deployed without governance create customer-facing risk no one owns. AI-Enabled CX without strategic priorities leaves the team redesigning journeys without moving the metrics the business needs to move.

Why Most Companies Score Level 1 or 2

The pattern shows up across the assessments we run: companies operating at Level 3 on the rest of the CS Maturity Assessment score Emergent or Developing (Level 1 or 2) on AI Readiness. Three structural reasons explain the gap.

The first is speed. Vendor pitches and product launches outpaced the frameworks for evaluating them. CS leaders made buying decisions in environments where the strategic question (what should AI do for this function?) had no organizational answer because no one above them had defined one. Leaders deployed tools because the alternative looked like falling behind.

The second is fragmentation. Each function bought point solutions for its own use cases. Support bought deflection tools, marketing bought scoring models, RevOps bought forecasting. The CS function inherited fragments of an AI stack rather than a designed system, and the data foundation underneath stayed siloed.

The third is the easy ROI trap. Support teams reported visible savings from case deflection. Companies measured the deflection rate, declared the AI investment a success, and moved on. The harder ROI lives in usage improvement, expansion conversion, time to value, productivity per CSM, and renewal acceleration. The structural work to measure those outcomes takes longer, and most teams skipped it.

The hackathon culture compounded the problem. Leadership empowered teams to test tools, run sprints, and identify use cases. Teams learned, but the learning sprawled across pilots instead of feeding a shared measurement framework. Twelve months in, many CS organizations sit on a portfolio of pilots with no consolidation, no measurement framework, and no path to scale.

What Changes When AI Readiness Lands on the Value Creation Agenda

Operating partners who include AI Readiness in the value creation conversation reframe the discussion for Customer Success leaders inside their portfolio companies. Their questions cover outcome contribution: revenue lift, retention impact, productivity gain, customer impact. License counts and pilot inventories don't carry weight in this version of the conversation.

For new acquisitions, AI Readiness belongs in post-sale revenue diligence. Older frameworks measured CS team size, NRR history, and platform spend without testing whether AI deployment was producing operating leverage. A target with strong NRR and weak AI Readiness signals a CS function still building the leverage to scale through the next phase of growth, and the gap shows up in the value creation plan as a foundation investment.

For existing portfolio companies, operating partners get a structured view of where the function sits, where the gaps are, and what the sequenced work looks like. CS leaders deliver measurable updates with documented progression, and the operating cadence improves because the conversation has a baseline to build from.

For CS leaders, the framework provides the structure to answer the question. Leaders walk into the room with a current-state score, a target-state plan, and a sequenced investment thesis tied to revenue outcomes. The conversation upgrades from defending tool spend to making the case for capability investment.

The Honest Timeline

The five elements progress at different speeds.

Of the five, leaders build Strategy and Governance fastest. With executive sponsorship, a CS leader builds the strategy document, names owners, defines outcome metrics, and establishes governance for data use and deployment review inside a single quarter. The constraint is making the decision.

Building Literacy and Skills takes two to three quarters. Leadership development, front-line training, and L&D plan revisions take time, and the compounding effect of a literate team grows over the following year.

Data Foundation is the longest lift. Unification across CRM, support, product, and billing, definition standards, and quality monitoring run twelve to eighteen months in most organizations. The investment overlaps with broader RevOps and data infrastructure work, and the returns extend beyond CS.

Teams scale Agent Deployment after the foundation is built. Mature deployments require the governance, data, and literacy underneath to operate first.

Teams sequence AI-Enabled CX last. Redesigning the customer journey requires the agents to work, the data to flow, and the team to know how to test and validate the outcomes.

Leaders starting the work today, with executive sponsorship and a sequenced plan, see measurable AI Readiness improvement in two quarters and structural change in four. The compounding effect on retention, expansion, and CS productivity shows up in the financial statements over the following twelve months.

Sequencing Doesn't Mean Waiting

The timeline above describes foundation work. CS leaders need to run experimentation in parallel. Strategy documentation and data unification take quarters, and teams that pause AI experimentation while the foundation gets built lose credibility with their own leadership, fall behind on learning, and arrive at the deployment phase without the operator instincts the work requires.

Foundation work and experimentation run together. While leaders build the strategy document, teams test hypotheses on specific agent use cases, run small-scope pilots, and measure outcomes against documented baselines. The discipline is simple: each experiment has a defined scope, a hypothesis, an outcome metric, and a kill criterion. Without those guardrails, teams reproduce the fragmented portfolio of pilots described earlier.
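One way to make the four guardrails concrete is to record each pilot in a fixed structure before it starts. The sketch below is illustrative only: the field names, the example pilot, and every number in it are hypothetical, and it assumes an outcome metric where higher is better.

```python
from dataclasses import dataclass


@dataclass
class Experiment:
    """One AI pilot with the four guardrails (all field names are hypothetical)."""
    scope: str             # what the pilot covers, e.g. one segment or workflow
    hypothesis: str        # the expected effect, written down before the pilot starts
    outcome_metric: str    # the single metric the pilot is judged on
    baseline: float        # documented value of the metric before the pilot
    kill_threshold: float  # minimum lift over baseline required to keep going

    def lift(self, observed: float) -> float:
        """Absolute change in the outcome metric versus the documented baseline."""
        return observed - self.baseline

    def decision(self, observed: float) -> str:
        """Return 'continue' if the lift meets the kill threshold, otherwise 'kill'."""
        return "continue" if self.lift(observed) >= self.kill_threshold else "kill"


# Hypothetical pilot; the numbers are made up for illustration.
pilot = Experiment(
    scope="Renewal-risk summaries for mid-market accounts",
    hypothesis="AI-drafted summaries raise on-time renewal outreach",
    outcome_metric="on_time_outreach_rate",
    baseline=0.60,
    kill_threshold=0.05,
)

print(pilot.decision(observed=0.70))
print(pilot.decision(observed=0.62))
```

Writing the kill criterion down before the pilot runs is the point of the structure: the decision to stop is made against a number agreed in advance, not argued after the fact.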

The five elements work as guardrails for parallel experimentation. Literacy training starts in quarter one, ahead of strategy sign-off. Leaders draft governance frameworks and apply them to early experiments. Teams monitor data quality on the sources current pilots depend on, while building the unified architecture underneath.

The pacing question for CS leaders is how to keep experimentation contained enough to produce learning, with a measurement framework documenting the lift, the loss, and the lesson from each pilot.

The Starting Point

CS leaders walking into a value creation conversation about AI need a structured answer. Our CS Maturity Assessment with AI Readiness embedded provides one: a current-state score across the five elements, a target-state plan tied to maturity progression, and a sequenced investment thesis that boards and operating partners can fund with confidence.

Combined with the Churn Tax Diagnostic, which quantifies the full financial cost of churn at 1.5 to 2.5x the reported rate, the assessment connects AI Readiness to the revenue protection thesis the rest of the business case rests on. AI Readiness sits inside the Capital Allocation conversation, where retention investment continues to lose to acquisition spend even as the math gets harder to defend.
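To give a sense of scale for the 1.5 to 2.5x range, here is a rough arithmetic sketch. It assumes the multiple applies directly to the ARR lost to reported churn, which is a simplification for illustration and not a description of how the diagnostic itself works. The function name and the example company figures are hypothetical.

```python
def churn_cost_range(arr, reported_churn_rate, low_multiple=1.5, high_multiple=2.5):
    """Return (low, high) estimates of the full cost of churn in dollars.

    Assumption: the 1.5x to 2.5x multiple is applied to the ARR lost
    at the reported churn rate. Illustrative only.
    """
    reported_loss = arr * reported_churn_rate
    return reported_loss * low_multiple, reported_loss * high_multiple


# Hypothetical company: $20M ARR with a 10% reported annual churn rate.
low, high = churn_cost_range(arr=20_000_000, reported_churn_rate=0.10)
print(f"Reported loss: ${20_000_000 * 0.10:,.0f}")
print(f"Full cost range: ${low:,.0f} to ${high:,.0f}")
```

Under these assumptions, a reported loss of $2M a year corresponds to a full cost of roughly $3M to $5M.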

If your operating partner, board, or CFO is starting to ask the AI Readiness question, a structured answer earns the next round of investment.

Contact us to discuss a CS Maturity Assessment with AI Readiness for your organization →